Jan 20, 2026

The regulatory environment for AI in wealth management has shifted dramatically. The SEC's 2026 examination priorities signal a fundamental change: AI oversight has been integrated across virtually all examination categories. The Division of Examinations will review registrants' compliance policies regarding AI-related services, their disclosures to investors, and the accuracy of any AI capability claims.

Building trustworthy advisory technology requires more than algorithmic sophistication. It demands architecture designed from the ground up for compliance, transparency, and accountability.

The Regulatory Landscape: What RIAs Must Address

Morrison Foerster's 2025 compliance guidance outlines the framework: RIAs using AI tools must implement policies and procedures reasonably designed to prevent violations of the Investment Advisers Act. This includes addressing AI hallucinations, data privacy, conflicts of interest, recordkeeping, and marketing claims.

The SEC has already charged investment advisers for making false or misleading statements about AI capabilities—a practice known as "AI washing." Key regulatory expectations for 2026 include:

Fiduciary compliance: AI tools must support, not replace, fiduciary obligations of care and loyalty

Transparency requirements: Clear disclosure of AI use in investment advice and client services

Risk management: Documented processes for identifying and mitigating AI-specific risks

Vendor oversight: Due diligence and ongoing monitoring of third-party AI providers

Client data protection: Robust safeguards for confidential information used in AI systems

Framework 1: Compliance-Ready AI Workflows

CFA Institute's 2025 research emphasizes that successful AI implementation requires close collaboration across organizations, balancing innovation with transparency and accountability.

Governance Structure

Effective AI governance starts with clear ownership:

Establish AI oversight committees with representation from compliance, technology, legal, and investment teams

Define approval hierarchies for AI tool deployment, particularly for client-facing applications

Create escalation protocols for AI anomalies, errors, or regulatory concerns

Document decision-making authority at each stage of the AI lifecycle

Risk Assessment Process

Before deploying any AI tool, conduct a structured assessment:

Identify use case scope: Document exactly what the AI system will and won't do

Map regulatory touchpoints: Determine which regulations apply

Assess potential harms: Consider hallucination risks, bias, data privacy, and conflicts

Establish risk thresholds: Define acceptable error rates and performance parameters

Plan mitigation strategies: Document how identified risks will be addressed
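The assessment steps above can be captured as a structured record rather than free-form notes. What follows is a minimal sketch in Python, not a prescribed format: the class `RiskAssessment`, its field names, and the example values are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class RiskAssessment:
    """Hypothetical pre-deployment record; field names are illustrative."""
    in_scope: list[str]                 # what the AI system will do
    out_of_scope: list[str]             # what it explicitly will not do
    regulations: list[str]              # regulatory touchpoints identified
    max_error_rate: float               # acceptable share of incorrect outputs
    mitigations: dict[str, str] = field(default_factory=dict)  # harm -> mitigation

    def unmitigated(self, harms: list[str]) -> list[str]:
        """Return identified harms that have no documented mitigation."""
        return [h for h in harms if not self.mitigations.get(h)]

    def ready_for_review(self, harms: list[str]) -> bool:
        """Ready only if scope, regulations, and every mitigation are documented."""
        return bool(self.in_scope and self.regulations) and not self.unmitigated(harms)


# Example values are invented for illustration.
assessment = RiskAssessment(
    in_scope=["draft client meeting summaries"],
    out_of_scope=["trade execution"],
    regulations=["Rule 206(4)-7"],
    max_error_rate=0.02,
    mitigations={"hallucination": "adviser reviews every summary"},
)
harms = ["hallucination", "data privacy"]
```

Here `assessment.unmitigated(harms)` returns `["data privacy"]`, so the record is not ready for review until that gap is documented.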

Policies Under Rule 206(4)-7

Rule 206(4)-7 requires RIAs to adopt written policies and procedures reasonably designed to prevent violations of the Investment Advisers Act. For AI systems, these should include:

AI-specific compliance policies addressing unique risks beyond traditional technology

Vendor management protocols including provider selection, contract requirements, and monitoring

Data governance standards specifying what client data can be used and how it's protected

Testing and validation procedures for AI outputs before they influence investment decisions

Incident response plans for AI failures, errors, or unexpected behaviors

Human Oversight Requirements

AI augments human judgment; it doesn't replace fiduciary responsibility:

Review layers for AI recommendations before investment decisions

Exception handling protocols when AI outputs contradict human judgment

Override documentation requirements

Periodic validation that AI systems continue performing as intended
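One way to enforce override documentation is to make the reason a required part of the review record itself. The sketch below assumes a hypothetical `ReviewDecision` record and `record_review` helper. Neither comes from any regulation or vendor product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ReviewDecision:
    """Hypothetical record of a human review of one AI recommendation."""
    recommendation_id: str
    reviewer: str
    approved: bool
    override_reason: Optional[str]
    reviewed_at: str  # ISO 8601 timestamp in UTC


def record_review(recommendation_id: str, reviewer: str, approved: bool,
                  override_reason: Optional[str] = None) -> ReviewDecision:
    """Create a review record; a rejection must carry a documented reason."""
    if not approved and not (override_reason and override_reason.strip()):
        raise ValueError("an override requires a documented reason")
    return ReviewDecision(
        recommendation_id=recommendation_id,
        reviewer=reviewer,
        approved=approved,
        # A reason is only stored when the reviewer overrides the AI output.
        override_reason=override_reason if not approved else None,
        reviewed_at=datetime.now(timezone.utc).isoformat(),
    )
```

Because the record is frozen, a decision cannot be quietly edited after the fact; a correction would need a new record.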

Framework 2: Explainability and Traceability

McKinsey's research notes that algorithmic opacity raises regulatory and trust concerns, making explainable AI frameworks essential.

Building Explainable Systems

Explainability requires demonstrating how decisions get made:

Input transparency: Document what data feeds AI models and how it's processed

Logic documentation: Explain the general methodology

Output interpretation: Provide context for AI recommendations in plain language

Confidence indicators: Display certainty levels for AI-generated insights

Alternative scenarios: Show how different inputs would change outputs

Traceability Architecture

Regulatory examinations will ask: "How did you arrive at this recommendation?" AI systems must support answers through:

Audit trails for AI decisions with timestamps and logs

Version control tracking which model produced which outputs

Data lineage recording what informed specific AI decisions

Decision reconstruction: the ability to reproduce how a specific recommendation was generated

Change logs for model updates or parameter adjustments
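As a rough illustration of how an audit entry can tie a recommendation to its model version and exact inputs, the sketch below hashes a canonical encoding of the inputs. The function names and entry fields are assumptions, and a production system would also need durable, tamper-resistant storage.

```python
import hashlib
import json
from datetime import datetime, timezone


def digest(payload: dict) -> str:
    """SHA-256 of a canonical JSON encoding, so equal inputs hash equally."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_entry(model_version: str, inputs: dict, output: str) -> dict:
    """Build one audit-trail entry; field names are illustrative assumptions."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,   # which model produced the output
        "input_digest": digest(inputs),   # ties the decision to its exact inputs
        "output": output,
    }


def matches_entry(entry: dict, candidate_inputs: dict) -> bool:
    """Check whether archived inputs are the ones behind a logged decision."""
    return entry["input_digest"] == digest(candidate_inputs)
```

During an examination, `matches_entry` lets a firm show that the archived inputs it presents are the same ones the logged recommendation was based on.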

Practical Implementation

Technology choices matter:

Select AI vendors with built-in audit capabilities

Implement middleware that logs AI interactions

Create compliance dashboards showing usage patterns and error rates

Build testing environments for validation before deployment

Establish documentation standards capturing design rationale
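Logging middleware can be as simple as a wrapper around every AI call. The following is a sketch under stated assumptions: `logged`, `interaction_log`, and `fake_model` are hypothetical names, and the in-memory list stands in for whatever durable store a firm actually uses.

```python
import functools
import time
from typing import Callable

interaction_log: list[dict] = []  # stand-in for a durable, append-only store


def logged(tool_name: str) -> Callable:
    """Wrap an AI call so every prompt, response, and failure is recorded."""
    def decorator(func: Callable[[str], str]) -> Callable[[str], str]:
        @functools.wraps(func)
        def wrapper(prompt: str) -> str:
            record = {"tool": tool_name, "prompt": prompt, "started": time.time()}
            try:
                record["response"] = func(prompt)
                record["status"] = "ok"
                return record["response"]
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and on failure, so no call goes unlogged.
                interaction_log.append(record)
        return wrapper
    return decorator


@logged("summarizer")
def fake_model(prompt: str) -> str:
    """Placeholder for a vendor call; a real integration would go here."""
    return f"summary of: {prompt}"
```

Since prompts may contain client information, the log itself falls under the firm's data protection controls.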

Framework 3: Regulatory Expectations for Secure AI Use

White & Case's analysis notes that examinations will scrutinize policies for data loss prevention, access controls, and AI-related incident responses.

Data Privacy and Confidentiality

When client data flows into AI systems:

Vendor contracts must prohibit using client data for model training

Use only necessary client information (data minimization)

Strip identifying information from training data when practical

Inform clients how their data will be used in AI systems

Consider opt-out mechanisms for AI-driven features
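Data minimization and identifier stripping can be applied before any record reaches an AI provider. The sketch below assumes a hypothetical field allowlist and uses a keyed hash (HMAC-SHA256 from Python's standard library) as a pseudonym. Which fields count as necessary is a firm-specific decision.

```python
import hashlib
import hmac

# Hypothetical allowlist: only these fields may leave the firm's systems.
ALLOWED_FIELDS = {"risk_tolerance", "time_horizon_years", "account_type"}


def pseudonymize(client_id: str, secret_key: bytes) -> str:
    """Replace a client ID with a keyed hash: stable across calls, and hard
    to link back to the client without the key."""
    return hmac.new(secret_key, client_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict, secret_key: bytes) -> dict:
    """Keep allowlisted fields only and swap the client ID for a pseudonym."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    reduced["client_ref"] = pseudonymize(record["client_id"], secret_key)
    return reduced
```

An allowlist fails safe: a new field added to the client record stays inside the firm until someone deliberately approves it.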

Access Controls

Who can deploy AI tools matters:

Role-based access limiting AI system use to personnel with legitimate business need

Approval workflows requiring compliance sign-off for new deployments

Segregation between model development and production deployment

Vendor access restrictions controlling third-party AI provider permissions
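These controls can be expressed as an explicit permission map. The roles, action names, and `deploy` helper below are hypothetical. In practice the map would come from the firm's identity and access management system rather than a hard-coded table.

```python
from enum import Enum


class Role(Enum):
    ADVISER = "adviser"
    COMPLIANCE = "compliance"
    ENGINEER = "engineer"
    VENDOR = "vendor"


# Hypothetical permission map; no single role can both request and approve.
PERMISSIONS = {
    Role.ADVISER: {"use_tool"},
    Role.COMPLIANCE: {"use_tool", "approve_deployment"},
    Role.ENGINEER: {"develop_model", "request_deployment"},
    Role.VENDOR: set(),
}


def can(role: Role, action: str) -> bool:
    """Return True if the role is explicitly granted the action."""
    return action in PERMISSIONS.get(role, set())


def deploy(requested_by: Role, approved_by: Role) -> bool:
    """Allow deployment only with a permitted requester and a permitted approver."""
    return (can(requested_by, "request_deployment")
            and can(approved_by, "approve_deployment"))
```

With this map, `deploy(Role.ENGINEER, Role.COMPLIANCE)` succeeds while `deploy(Role.ENGINEER, Role.ENGINEER)` does not, which is the segregation of duties the list describes.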

Testing and Validation

Secure AI requires ongoing validation:

Pre-deployment testing against known scenarios

Bias detection for systematic errors or discriminatory outcomes

Stress testing under market volatility

Periodic revalidation (quarterly, annually, and after major market events)

Third-party validation for critical systems
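Pre-deployment testing against known scenarios amounts to a regression suite for the model. The sketch below uses a deliberately simple stand-in, `toy_model`, and invented scenarios. It illustrates the harness, not any real investment methodology.

```python
from typing import Callable


def scenario_error_rate(model: Callable[[dict], str],
                        scenarios: list[tuple[dict, str]]) -> float:
    """Run the model on known scenarios and return the share it gets wrong."""
    if not scenarios:
        raise ValueError("at least one scenario is required")
    failures = sum(1 for inputs, expected in scenarios if model(inputs) != expected)
    return failures / len(scenarios)


def toy_model(inputs: dict) -> str:
    """Stand-in rule for illustration; not an investment methodology."""
    return "conservative" if inputs["horizon_years"] < 5 else "growth"


# Invented scenarios pairing inputs with the answer reviewers expect.
SCENARIOS = [
    ({"horizon_years": 2}, "conservative"),
    ({"horizon_years": 20}, "growth"),
    ({"horizon_years": 5}, "conservative"),   # boundary case the toy rule misses
    ({"horizon_years": 30}, "growth"),
]
```

Here `scenario_error_rate(toy_model, SCENARIOS)` is 0.25, because the toy rule misses the boundary case. Comparing that figure with the documented risk threshold gives a clear go or no-go signal before deployment.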

Practical Compliance Integration

Documentation Standards

Create standardized documentation for each AI system covering:

System purpose and scope

Data sources and processing methods

Model methodology

Validation approach

Risk assessment and mitigation

Human oversight protocols

Vendor information and contract terms

Training and Competency

CFA Institute research emphasizes that staff need training on:

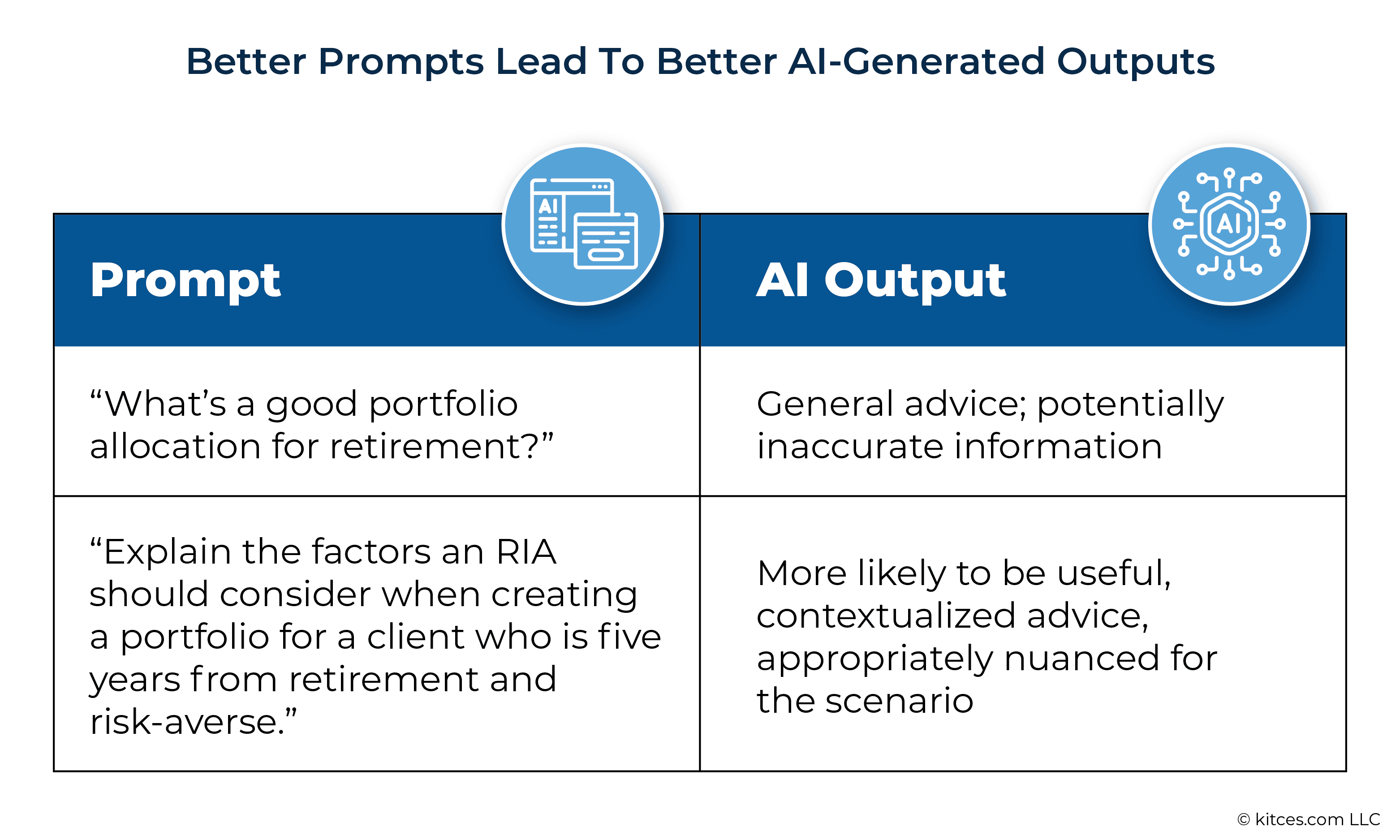

AI literacy: Basic understanding of how AI works and its limitations

Tool-specific instruction on firm systems

Compliance requirements when using AI

Prompt engineering for generative AI

Error recognition to identify hallucinations

Monitoring and Reporting

Compliance-ready AI requires ongoing oversight:

Usage dashboards tracking which systems are used, by whom, and how frequently

Performance metrics monitoring error rates and override frequency

Regulatory reporting preparation for SEC examinations

Board reporting on AI risk summaries

Client communications disclosing AI's role
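The usage and performance metrics above can be derived from logged interaction events. This is a minimal sketch that assumes each event is a dictionary with `system`, `user`, `error`, and `overridden` keys. That shape is an assumption for illustration, not a standard.

```python
from collections import defaultdict


def summarize(events: list[dict]) -> dict[str, dict]:
    """Aggregate per-system usage, error rate, and override rate.

    Each event is assumed to look like
    {"system": str, "user": str, "error": bool, "overridden": bool}.
    """
    totals = defaultdict(
        lambda: {"calls": 0, "errors": 0, "overrides": 0, "users": set()}
    )
    for event in events:
        bucket = totals[event["system"]]
        bucket["calls"] += 1
        bucket["errors"] += int(event["error"])
        bucket["overrides"] += int(event["overridden"])
        bucket["users"].add(event["user"])
    return {
        system: {
            "calls": b["calls"],
            "unique_users": len(b["users"]),
            "error_rate": b["errors"] / b["calls"],
            "override_rate": b["overrides"] / b["calls"],
        }
        for system, b in totals.items()
    }
```

A rising override rate for one system can be an early sign that its outputs are drifting away from adviser judgment and that revalidation is due.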

The Path Forward

The SEC Investor Advisory Committee's December 2025 recommendations signal evolving expectations around AI disclosure, board oversight, and operational transparency.

Firms that build compliance into AI architecture from the start will move faster than those retrofitting governance onto deployed systems. The question isn't whether regulators will examine AI use—it's whether your systems will demonstrate the transparency, controls, and human oversight they're looking for.

Trustworthy advisory technology emerges from intentional design: systems built to augment fiduciary judgment while maintaining the transparency, accountability, and risk management that regulatory compliance demands.
